Building Reliable Agents with Ironclad

Ironclad was one of the first companies to successfully deploy Gen AI in production, adopting LLMs even before ChatGPT was released. I recently sat down with Cai Gogwilt, Ironclad's Co-founder and Chief Architect, to understand how they overcame the initial challenges and what lessons product leaders can take from their success.

Subscribe to Humanloop’s new podcast, High Agency, on YouTube, Spotify, or Apple Podcasts.

Ironclad’s AI products help lawyers automate contract review and negotiate common contracts. The AI Assist feature lets lawyers configure “playbooks” of positions their company is willing to accept; Ironclad’s AI then finds the relevant clauses and edits the contract to match. They’ve also built a conversational agent that can act as a lawyer would within the Ironclad platform.

Adoption has been strong: one large customer used the feature to negotiate about 50% of its incoming contracts, and OpenAI itself uses Ironclad’s AI tools. Achieving this level of success with AI wasn’t trivial, though, and at one point they almost scrapped their entire AI agents project.

Cai’s key advice to product leaders starting out with generative AI today

1) Move quickly and take big swings

AI is evolving very quickly and it can be tempting to wait and see how things play out before making a commitment yourself. Cai warned that this was a mistake because of how disruptive AI is likely to be.

“If you’re right about it and you’re taking big swings, even if you’re wrong in a certain direction, then there's a good chance someone is next to you doing that same direction or finding that direction, who is going faster.”

2) Fit your AI features into a form-factor your customers are already familiar with

Lawyers aren’t famous for adopting new technology quickly. Ironclad was able to succeed despite selling to a traditionally conservative audience because they integrated AI directly into Microsoft Word where the lawyers were already comfortable. Cai says there’s a generalisable lesson here: don’t force your customers to learn a new interface and try as far as possible to fit your AI features into forms that customers are already familiar with.

3) Launch quickly and iterate using customer feedback

It’s crucial to get your product into users' hands as soon as possible to understand how they interact with it and uncover unexpected use cases. Cai emphasized that you won’t fully grasp the potential and limitations of your AI until you see it in action with real users.

“Your users will show you capabilities that you didn't realize the LLM had.”

By launching early and often, you can gather valuable data and feedback to refine and improve your prompts and model iteratively. This approach helps ensure that your AI applications are not only reliable but also aligned with user needs and preferences.

Most companies today are building Retrieval-Augmented Generation (RAG) systems but Ironclad went straight to AI agents. Cai felt strongly that this was the future and that RAG is a temporary solution. He wanted to make sure that Ironclad stayed ahead of the curve. This worked well initially but when they tried to add more than two tools to their initial agent it became unreliable. They were about to abandon the project when one of their engineers developed a new internal tool called Rivet.

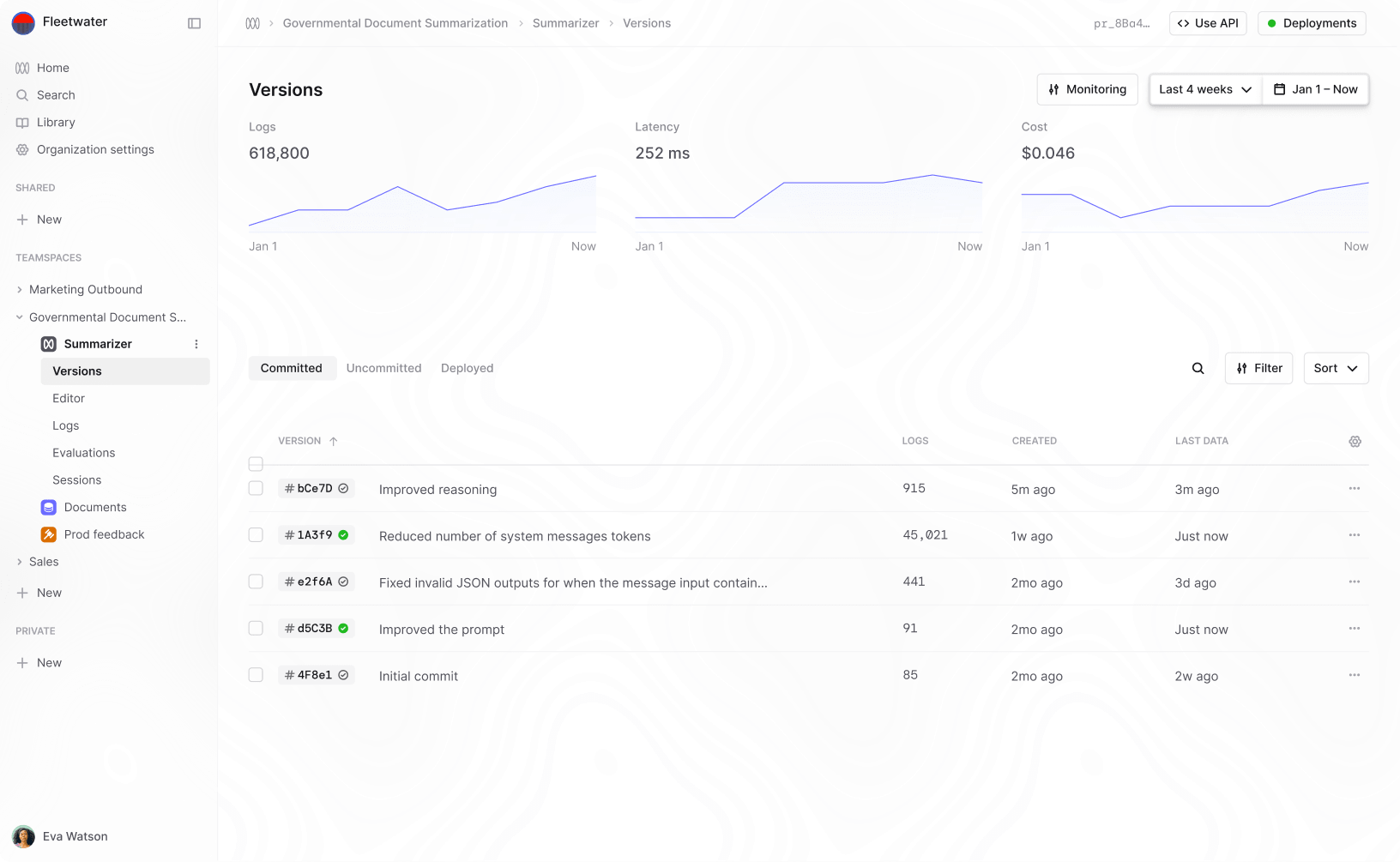

Rivet is Ironclad’s open-source AI agent builder. Cai’s a big believer that if you want to build robust AI agents you need tooling that helps with evaluation, debugging and iteration. Rivet is a visual programming tool that lets a developer map out an agent and then see how the data flows as the agent runs. This makes it much easier to understand where things are going wrong, to debug and to improve over time. Cai said that without good tooling for evaluation and debugging it would have been impossible to get their AI agents to work.

“I was staring at logs and pages of console logs. Being able to visualize that and connect to a remote debugger... suddenly, things started to click.”

Rivet now has a big and active community of contributors. If you’re looking for a hosted tool that can help with evaluation and debugging as well as collaboration on prompt development and management then we’d also love for you to check out Humanloop.

Ironclad’s experience demonstrates that to successfully launch an AI product, companies must take bold steps, integrate AI into familiar user interfaces, and invest in robust tooling for debugging and iteration. Cai emphasized the importance of moving quickly and decisively: the fast pace of AI development means that waiting can result in being outpaced by competitors. Fitting AI features into existing workflows helps with adoption, making it easier for traditionally conservative users, like lawyers, to embrace the technology. Finally, having effective tools for evaluation and debugging, such as Rivet or Humanloop, is crucial for refining AI agents and ensuring they work reliably in production.

Transcript

Chapters

- 00:00 Introduction and Background

- 02:08 Ironclad's Journey and Early Adoption of AI

- 03:25 Generative AI Features: AI Assist and AI Agents

- 05:58 User Interface and Interaction with AI

- 07:36 The Evolution of AI Models and Possibilities

- 09:03 The Impact of GPT-3 and the Journey to Launch

- 14:35 Customer Reactions and Adoption of AI

- 21:29 Lessons for Designing AI Products

- 23:48 Engineering Challenges and Internal Tooling

- 25:34 Exploring RAG and ReAct for AI Applications

- 26:02 Transition to AI Agents

- 27:00 Challenges with AI Agents

- 28:59 Introduction of Rivet

- 31:43 Benefits of Visual Programming

- 33:59 Reasons for Open Sourcing Rivet

- 40:57 The Future of AI Agents

- 43:45 Importance of Launching Early and Often

- 48:30 Speculations on the Future of LLMs

- 49:33 Impact of AI on Legal Work

- 52:55 Advice for Companies Starting with AI

00:00 Introduction and Background

Raza Habib: Hi, I'm Raza, one of the co-founders of Humanloop, and today I'm joined by Cai Gogwilt, one of the founders and the CTO of Ironclad.

Cai Gogwilt: Thanks for having me.

Raza Habib: I'm quite excited to go on the journey of how you guys have gone from initial ideas to robust deployed production AI systems, and even agents. But do you mind just giving us a little bit of color on that journey? I think you recently had your nine-year anniversary at Ironclad.

Cai Gogwilt: Yeah. Thanks for having me on. So maybe just to rewind all the way back to the beginning. I studied computer science and physics at MIT. After that, I worked at a company called Palantir, working in the kind of defense space. After leaving Palantir, I found myself very interested in continuing to work on software that actually helps people be more effective at what they do from a work perspective. That's how I started getting into legal technology. I talked to a few lawyers and really liked how they think about contracts, which are very programmatic. You're trying to anticipate edge cases that might happen. The more I talked to lawyers, the more I was excited to learn more about the space. I asked them to introduce me to more lawyers, and so on and so forth. I haven't stopped and, you know, it's been almost 10 years now. So, that's kind of my story of how I got into this.

02:08 Ironclad's Journey and Early Adoption of AI

Raza Habib: I imagine it feels like the time's gone very quickly, but the rate of change in AI in that time has been super fast. And I know that you guys were early adopters of traditional machine learning methods and then more recently LLMs. Do you mind starting with just telling me what are some of the things that you guys have built with AI more recently? What are the features? What does that look like if I'm an Ironclad customer?

Cai Gogwilt: I love how we now refer to this as traditional AI, even though it's like...

Raza Habib: Well, all right. I agree. I suppose like machine learning, I think of as traditional AI. And I think of LLMs as like gen AI or foundational models. You're right. There was also GOFAI before that. And every month it feels like something new is out.

Cai Gogwilt: Yeah. Yeah. I haven't found a good term. I've also said traditional AI a couple of times, but I wonder if AI might be like a slight rebrand. But yeah, in answer to your question, most recently, we've been releasing a ton of new generative AI features. I think our first generative AI feature was AI Assist that we launched in January of last year. So basically assistive contract editing. As a lawyer, you're often faced with contract negotiation and actually it turns out you negotiate the same contract over and over again, just with slight variations. And so what we released is a series of features in contract negotiation that actually allow the lawyer to configure what position their team is comfortable with and then use a one-button click to get generative AI to make the modifications to bring the contract into compliance with those pre-specified positions. So we released that back in January. It's been taking off. A healthy proportion of our customer base is using that feature to auto-negotiate contracts.

Cai Gogwilt: There's a good chance that if you're on the other side of contract negotiation, it's been automatically negotiated on the other side by Ironclad.

Cai Gogwilt: And then even more recently than that, we've actually delved deeply into this area of AI agents.

05:58 User Interface and Interaction with AI

Raza Habib: I'm definitely keen to dig a bit deeper on that point. On this first one of this kind of like assistant review, what does it actually look like for the end user? So I'm a lawyer. I've got my contract in there. How am I interacting with AI? Do I just pre-configure it once and then press a button, or is it a chat-like interface? What's the UI of this?

Cai Gogwilt: The UI is more like a document editor, like Google Docs or something like that. So you have the doc on one side, and then you have what we call your playbook on the side panel, and the playbook is just telling you what's wrong or right about the contract. And then either as you edit the contract directly in the editor, or if you click a button that tells it to correct this particular stance, then all of a sudden, everything will auto-update and everything will run. And I think the AI playbooks and AI Assist functionality is really cool because it's using that conventional AI that we were talking about, as well as the generative AI. Trying to create something that's greater than the sum of its parts by combining the best of what conventional AI has to offer with the best and the promise of what generative AI has coming next.

07:36 The Evolution of AI Models and Possibilities

Raza Habib: Do you mind just double-clicking on that a little bit more? What is the balance there? What's the conventional part doing and what's the generative part doing?

Cai Gogwilt: The conventional part is spotting the issues. So, you configure what positions you want in a legal contract. We have a lot of conventional AI models that we've trained in-house using all sorts of conventional techniques, and we have a really good sense of what the accuracy level is there. We have confidence scores and things like that, things that we're starting to find analogs to in generative AI but maybe we don't have quite there yet. So the conventional models are recognizing things, giving us a ground truth on what our confidence is that we are in compliance with our playbook. Whereas the generative AI is doing the creative task of modifying the text to get us into compliance. Which is then checked by the conventional models to make sure it's actually what we thought it was going to be.
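The detect, rewrite, re-check loop Cai describes can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Ironclad's implementation: `classify_governing_law` and `rewrite_with_llm` are hypothetical stand-ins for a trained classifier and an LLM call, and the confidence threshold and retry count are made-up values.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Check:
    compliant: bool
    confidence: float  # the classifier's confidence in its verdict, 0..1


def classify_governing_law(clause: str) -> Check:
    """Hypothetical stand-in for a trained classifier (keyword match only)."""
    if "california" in clause.lower():
        return Check(compliant=True, confidence=0.95)
    return Check(compliant=False, confidence=0.90)


def rewrite_with_llm(clause: str) -> str:
    """Hypothetical stand-in for the generative step; a real system would call an LLM."""
    return "This Agreement is governed by the laws of the State of California."


def negotiate(
    clause: str,
    classify: Callable[[str], Check],
    rewrite: Callable[[str], str],
    min_confidence: float = 0.8,  # assumed threshold
    max_attempts: int = 3,        # assumed retry budget
) -> Optional[str]:
    """Return a clause the classifier accepts, or None to escalate to a human."""
    verdict = classify(clause)
    if verdict.compliant and verdict.confidence >= min_confidence:
        return clause  # already in line with the playbook
    for _ in range(max_attempts):
        candidate = rewrite(clause)
        verdict = classify(candidate)  # the conventional model checks the generated text
        if verdict.compliant and verdict.confidence >= min_confidence:
            return candidate
    return None


fixed = negotiate(
    "This Agreement is governed by the laws of New York.",
    classify_governing_law,
    rewrite_with_llm,
)
```

The point of the design is that the generative step never has the last word: its output only reaches the user once the classifier, whose accuracy is already measured, agrees with it.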

09:03 The Impact of GPT-3 and the Journey to Launch

Raza Habib: That's really interesting. So making heavy use of a hybrid classifier-generator system to get it to be reliable. That's super interesting. How plausible would it have been to build a system like this two years ago? I think I know the answer to that question when I'm laying it up. So the reason I'm asking is like, for people who maybe have not been as close to this, what's changed? Why is this possible now, and it wouldn't have been possible two years ago?

Cai Gogwilt: Oh my goodness. Yeah, thank you for the layup of a question. Absolutely. Two years ago, this would have been completely unthinkable. When we actually started Ironclad, the name of Ironclad originally was Ironclad.ai, and we had this feeling like AI is going to change the way legal work is done and it's going to happen in three or four years. So we're going to start building now and we're going to lay this foundation and three or four years from now, AI is just going to take off. Natural language processing (NLP) is going to be breaking new ground. And 10 years later we were right. But yeah, no, we've been laying that foundation for so long now. AI has shown more and more promise with, you know, like, what is it, fastText in 2017 or something and starting to get that popular and then transformers, and then suddenly, LLMs and the generative side of things.

Raza Habib: ChatGPT, I think, was November or December 22.

Cai Gogwilt: Okay. Yeah. So I think it was August 22, maybe September 22. Someone from our legal engineering team came to me and said, "Hey, you need to check out GPT-3. It's definitely different from the last time we checked it out."

Raza Habib: Around the time that you and I first spoke about this as well, right?

Cai Gogwilt: Yeah, yeah, yeah. I'm very impressed that you pivoted Humanloop towards that so quickly. It was very prescient in hindsight. But at any rate, yeah. So one of our legal engineers came and said, "Hey, check out GPT-3." Our entire legal engineering team was kind of showing things to each other and really impressed by the technology. Our legal engineering team basically has people who were former lawyers, former programmers, former consultants, and they help our customers implement our technology, integrate with other technology. These are people who, when they say this is really impressive technology, I listen. I'm like, okay, I've got to check this out. So I took a look at it. It was actually doing some very crazy stuff and tested it out on this system, this product that we were starting to work on already, the playbook negotiation feature. And realized, okay, cool. The top ask that our beta customers have for this feature is like, great. It's recognized the issues now. Can it just fix the simple ones? I was like, no, no, this is like 10 years away. And all of a sudden, there it was in OpenAI's playground, just kind of happening in front of me. Which was absolutely wild.

Raza Habib: We all have a story like that now of taking one of our favorite problems, maybe something that we'd tried a couple of years earlier, or in my case, it was something that I'd read an entire PhD thesis about and then running it through GPT-3 and just watching it work first time.

Cai Gogwilt: Right.

Raza Habib: I think if you're outside of AI, people spot all the mistakes. I remember showing my family ChatGPT for the first time. It was a little bit early, and them basically being like, so what? It wasn't impressive because they didn't know what the state of the art was the day before. But yeah, it's interesting to hear your version of that because for me as well, I think there's definitely a moment of aha, kind of light bulb moment. How long was it from that first conversation?

Cai Gogwilt: Can I ask you a question? I want to share this Turing test moment that I have. Have you ever had that Turing test moment where you're interacting with an LLM and you're like, hold on a sec. Is someone on the other side there? Have you had that moment or not really?

Raza Habib: I haven't, but there is a website that's actually trying to run the Turing test at an enormous scale. I'll see if I can dig it out for the show notes. I can't remember the name of it right now, where you log on and you're basically playing a competitive game. In each round, the goal of the game is within a minute, can you tell whether or not the person you're speaking to is an AI? And some fraction of the time it is, and some fraction of the time you're being paired with someone else. I think if you're sufficiently good at prompt engineering, if you're like you or me, and you probably spend many hours a week on this, you know the failure modes. So you can find it relatively quickly. I always just ask them to multiply two very large numbers together. But outside of those very distinct things like, yeah, let's multiply big numbers together. You're right. It's hard.

Cai Gogwilt: Well, I actually had this moment early, right around, I think right before ChatGPT came out. I had this moment where I was playing around with the API, and I kept using the same test case over and over again, like change governing law to California. And I was connecting the UI up and I hadn't written unit tests because I'm not great about that. And I was just sending the same API request over and over again, like change governing law to California. One time it came back, "Does anyone at this company give a fuck?" And I was like, oh my god, someone at OpenAI, like one of my friends at OpenAI is monitoring my API requests and just pulling my leg. But obviously, that wasn't the case. I just had temperature set to 200 or something like that. But yeah, that was my Turing test moment. It was late at night, so maybe I wasn't thinking properly, but I literally thought that I was talking to another human or that a human had intercepted this API request and decided to pull my leg.

Raza Habib: Yeah, and it's amazing that those moments are happening more and more often, right? I think the first version of this was when Blake Lemoine resigned from Google because he was convinced that LaMDA was sentient or whatever. And when you're passing the Turing test so hard that people are losing their jobs over it, that's a real step change in technology. But maybe I can bring us back to Ironclad and the next question I had is, okay, the legal engineering team comes to you, they show you this impressive demo. We all have this first light bulb moment. How long was it from, wow, this is really cool, to actually having something launched that is rewriting the clauses for people in production? What was that journey like?

Cai Gogwilt: That journey wasn't too crazy, I think. So August we started playing with the APIs, or maybe it was August that InstructGPT happened. And I feel like after InstructGPT, suddenly it just became much easier to understand how powerful this was. By December we had a couple of customers using it. Conveniently, OpenAI is one of our customers, so we thought that they would probably react pretty positively. So we put them into our alpha or beta program. So from let's call it August to December, from first discovering that the APIs can do something to actual end users using the technology. We didn't make it generally available until April, but there's a whole host of reasons for that as well.

Raza Habib: And what was that first MVP version like, the same product you told me about at the beginning, or was it something smaller first?

Cai Gogwilt: It was pretty much that. There's been a lot of, I mean, as you know, there's a lot of iteration that goes into software. And so I think the problem statement that it solves and the description of it in the abstract is the same from April of last year to today, but there's been a lot of work to make it much more usable and much more powerful, give the users a better ability to control how the AI responds and also tune the AI to come up with better responses.

Raza Habib: And what has been the response from lawyers? So when a lawyer uses it for the first time, how do they react?

Cai Gogwilt: I've seen the entire spectrum of reactions. There have been people who are just like, this is amazing. I'm going to use it for everything. And literally do use it to negotiate. I think there is one big customer we measured that used it to negotiate 50 percent of their incoming contracts from a pretty large volume.

Raza Habib: All the contracts that they're negotiating are now being negotiated or drafted by AI.

Cai Gogwilt: Yes. Yeah. I mean, people are talking about the internet being composed of mostly AI-generated content very soon or already. I think the same might be true about contracts pretty soon as well. But yeah, I've also seen the opposite reaction of like, I will never ever use this. Although one person that I spoke to had a great reaction that kind of encapsulated everything in one go. He said, if I recall correctly, "I will never ever use this AI negotiation feature except when it's the end of the quarter. Then I'm going to use it all the time." I think that actually encapsulates probably most people's feeling about it. But I do think that there is this wide spectrum of how people respond to AI products nowadays. I think that early adopter to late adopter and early majority bell curve is just playing out every single day. There are users on the early majority, early adopter side for Ironclad who are telling me about things that they're using Ironclad AI for that I had no idea were possible. And then there are people who are like, "Nope, shut it off. Shut it all off. Make sure that you have this toggle where you can turn AI off entirely for our company."

Raza Habib: That's one that I'm kind of curious about, which is that obviously it's one of the more sensitive industries in which to be deploying AI. People are very sensitive about their contracts. They're worried about people training on their data. They're worried about mistakes, right? The stakes can often be quite high. So you might have thought that actually legal contracts would have been one of the later places to get people to trust this. How have you guys overcome that? Because I suspect it's going to play out in every industry that you have to win over the trust of your customers and get them to be comfortable with the idea that an AI is generating some of this stuff.

Cai Gogwilt: I think part of what helped us here was being very thoughtful around our first product launch with generative AI. The way we implemented this AI negotiation feature was very user-centric. The user can see the reasoning behind why the AI thinks the contract is wrong. And then the user is given this very familiar UI for reviewing the changes that the AI made. Every lawyer is deeply familiar with track changes in Microsoft Word. And so we put it directly in that interface for them. So that kind of helped breed trust at the beginning with our users. And my thinking is that actually sort of helps trailblaze for legal tech in general. Because suddenly here's everyone's saying generative AI is useless and then it's like, well, no, but like Ironclad has a bunch of customers who are doing incredible things with it and have really good reason to trust it. And then you look at a demo video or something of what we put out there and it's pretty clear how, even if the generative AI is not correct all the time, there's this human review step where the AI plus human are better than either individually the human or the AI. So that certainly helped us. Maybe the other thing that's helped us is the field of law is interestingly right in the crosshairs of what large language models are capable of.

Raza Habib: It's a weird bit of intersection because it's simultaneously somewhere where the model capabilities are very strong, but where the stakes are very high and where trust is often difficult to win. So there's a sort of counterbalance.

Cai Gogwilt: Yeah, yeah. So yeah, I think adoption within legal of generative AI has interestingly, and maybe for the first time in history, put lawyers at the forefront of adoption of new technology.

Raza Habib: Yeah, that is interesting. And do you think there's any generalizable lessons there? Like, if I'm a product leader trying to design one of these products and I'm trying to make sure that my users trust it, what are some of the things I might think about in how I design it to maximize that probability?

21:29 Lessons for Designing AI Products

Cai Gogwilt: Yeah, absolutely. I think there's a ton of UI work that needs to be done and new interaction patterns that are starting to come up. Maybe a lesson that we could take away for generally moving into a new industry with generative AI is to fit it into a form factor that people are familiar with, that experts in the area are familiar with. We built out a Microsoft Word compatible, browser-based editor. And so it was very natural for us to fold generative AI into that format. But obviously, we built that out over the years in order to give our users a feeling of familiarity when they were interacting with our system. And so building on top of that, we were able to slot generative AI into an interaction pattern that felt familiar to them.

That being said, I continue to wonder about what... Claude 3 came out, starting to see what the capabilities are there. Who knows what GPT-5 will be? Who knows? Google has their Gemini Ultra that none of us have access to yet. And so I continue to wonder how much this human oversight is going to be necessary. But for now, absolutely with all the frontier models that are available to us, that kind of interaction pattern and that familiarity is a very important thing to give to users when they're first experiencing this.

23:48 Engineering Challenges and Internal Tooling

Raza Habib: Cool. And I'll definitely circle back near the end to that question of how good the models might get, but maybe for a moment, that's super helpful background on the customer implications of this and what the product looks like for lawyers. I'd like to go nerdy for a little bit now and chat about some of the engineering details and the team that built this and how you guys got there.

First question is just like, what were the challenges along the path? What was surprisingly hard? What was easy? I know that I would like to chat a lot about some of the internal tooling you guys have built. And I'd like to hear the backstory of what got you to that point or what motivated it.

Cai Gogwilt: After we launched the initial version of AI Assist, we started to think more broadly about what these capabilities were. And we were really inspired by some of the blog posts coming out of LangChain. I don't know if you remember the chat with the LangChain documentation blog post.

Raza Habib: Chat with PDF was a huge wave for a little while. Everyone had some version of talking to documents.

Cai Gogwilt: Yes. And I feel like RAG had a comeback just a few months after that as well, with all the vector databases and database companies being like RAG. But yeah, we looked at that and...

Raza Habib: Just for people who might not know, do you want to give the 101 on RAG briefly?

Cai Gogwilt: Absolutely. RAG, or retrieval-augmented generation, is this technique whereby you can put in a large amount of text that the large language model wouldn't be able to otherwise process, and then use embeddings to pull out the most relevant context, retrieve the most relevant context, and then give that to the LLM to answer a question based on that context. I've heard it referred to as expanding the context window of the LLM, which may be less and less necessary with the existing context windows.
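The retrieve-then-generate flow Cai outlines can be illustrated with a small, self-contained Python sketch. It makes several simplifying assumptions: `embed` is a toy bag-of-words vector rather than a learned embedding model, the sample contract chunks are invented, and the final LLM call is left out, so the sketch stops at building the prompt.

```python
import math
import re
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy embedding: word counts. A real system would use a learned embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, chunks: List[str], k: int = 2) -> List[str]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(question: str, chunks: List[str], k: int = 2) -> str:
    """Put only the retrieved context in front of the model, then ask the question."""
    context = "\n".join(f"- {c}" for c in retrieve(question, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Invented sample data for illustration.
CHUNKS = [
    "Governing law: this agreement is governed by the laws of California.",
    "Payment terms: invoices are due within 30 days of receipt.",
    "Termination: either party may terminate with 60 days written notice.",
]

prompt = build_prompt("When are invoices due?", CHUNKS, k=1)
```

Only the payment-terms chunk ends up in `prompt` here, which is the sense in which RAG "expands" the context window: the model sees the relevant slice of a corpus that would not fit in a single request.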

Raza Habib: Some combination, I guess, of search and generation, Perplexity being the prototypical example. But maybe that RAG system didn't work for you guys. Yeah.

Cai Gogwilt: It worked fine, actually. Seeing that RAG was this great solution and seeing so many solutions being built around it, we thought, this will be great for our customers. So let's start building it. But instead of just building RAG, let's actually go further. And we were also inspired by the ReAct paper and this idea that LLMs could learn to use tools. And so we started working on AI agents instead of working on RAG.

25:34 Exploring RAG and ReAct for AI Applications

Raza Habib: You leapfrogged all the way.

Cai Gogwilt: Yeah, we were kind of like, okay, when you're building in a more sensitive environment or a more enterprise environment, you can't release things just immediately. And so we tried to think, okay, what's that one step further so that when we decided to release our next feature, the rest of the world will be there with us as opposed to three months ahead.

26:02 Transition to AI Agents

Cai Gogwilt: So we chose to go with AI agents. At first, it went swimmingly well. We were building this conversational interface, this chat interface, and it was doing the ReAct thing, looking at making an API call and then like, if there was an error, trying again and stuff like that. And then we tried to add a third functionality, and all of a sudden it just started going haywire. I was like, I don't know what's going on here. I was staring at logs and pages and pages of console logs.
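The "make an API call, and if there was an error, try again" behavior can be sketched as a simple tool-use loop in Python. This is a simplified illustration in the spirit of ReAct, not Ironclad's agent: `scripted_model` is a hypothetical stand-in for the LLM that picks the next action, and `lookup_contract` is an invented tool with invented data.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, str]  # (action name, action input)


def lookup_contract(contract_id: str) -> str:
    """Hypothetical tool: raises on unknown IDs, as a real API might."""
    contracts = {"C-42": "NDA with Acme, governing law: California"}
    if contract_id not in contracts:
        raise KeyError(f"unknown contract {contract_id}")
    return contracts[contract_id]


def scripted_model(history: List[str]) -> Step:
    """Stand-in for an LLM choosing the next action from the history so far."""
    if not history:
        return ("lookup_contract", "C-24")  # first guess is wrong on purpose
    if history[-1].startswith("Error"):
        return ("lookup_contract", "C-42")  # retry after seeing the error
    return ("finish", history[-1].split(": ", 1)[1])


def run_agent(
    model: Callable[[List[str]], Step],
    tools: Dict[str, Callable[[str], str]],
    max_steps: int = 5,
) -> Tuple[str, List[str]]:
    """Alternate between model decisions and tool calls until the model finishes."""
    history: List[str] = []
    for _ in range(max_steps):
        action, arg = model(history)
        if action == "finish":
            return arg, history
        try:
            history.append(f"Observation: {tools[action](arg)}")
        except Exception as exc:
            # Feed the error back so the model can correct itself on the next step.
            history.append(f"Error: {exc}")
    raise RuntimeError("agent did not finish within max_steps")


answer, history = run_agent(scripted_model, {"lookup_contract": lookup_contract})
```

With one tool the history stays short and readable. Each additional tool adds routing decisions the model can get wrong, and the only record of what happened is this kind of flat log.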

Raza Habib: Who was working on this at this moment in time? So, help me understand, how many people is this? So you personally, as the CTO, are deep in on this project, which I find interesting in and of itself, but who was with you? How big was the team?

Cai Gogwilt: The team at that point was just two of us. It was just me and Andy. The AI Assist and negotiation functionality was being handled by an amazing team of probably about five people, six people.

Raza Habib: And what's the makeup of that team? Is it a traditional ML team? Is it more generalist software engineers? Who did you put on this?

Cai Gogwilt: A couple. So we had a team. Let's go with conventional ML. Someone from our AI platform team was deeply involved in it because, again, it was kind of marrying conventional AI with generative AI. And then, Jen, a fantastic product manager, Angela, a fantastic product designer, and three engineers, Catherine, Wolfie, and Simuk, if I recall correctly. So yeah, about six people working on the negotiation side of things.

Raza Habib: Okay, fantastic. Sorry to interrupt. I'm just always curious as to the team makeups and how they're evolving. Maybe we can come back to that. So you were about to give up and Andy comes to...

27:00 Challenges with AI Agents

Cai Gogwilt: About to give up. Andy's spinning up on the project, and he comes to me on Monday and he's like, okay, I know you said we're going to stop on this direction, but hear me out. I refactored it all into this new system, Rivet, that I built over the weekend. It's a visual programming environment.

Raza Habib: I had exactly the same reaction when you first said it to me. I was like, visual programming, really?

Cai Gogwilt: Someone gave me some really good reasons for it. Someone in our Rivet community made a post about how there were all these analogs. My guess is it's sort of like with LLMs, it's sort of like stateless and functional. Data flows from one direction, goes through a few iterations, and then ends up transformed in a different way. Being able to see that transformation is actually pretty nice. Many of us like functional programming, myself included. But I do find that debugging functional programming can be pretty tricky, especially in languages not designed for functional programming. We were basically running into the same thing with LLMs. Chaining more than two LLM calls together, all of a sudden, you're like, which of my prompts is messed up? Which of the LLM calls is making things go haywire? Being able to visualize that and connect to a remote debugger, Rivet's remote debugger to the actual agent system allowed us to pinpoint, oh, that's where it's going very wrong. That chain of thought thinking is causing it to then make crazy haywire calls to our APIs and stuff like that.
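The debugging problem Cai describes, working out which call in a chain went wrong, comes down to recording what flows in and out of every step. The Python sketch below shows that idea in plain code. It is not Rivet's API: `run_traced`, `first_bad_node`, and the three lambda "nodes" are hypothetical, with the lambdas standing in for LLM calls.

```python
from typing import Any, Callable, Dict, List, Optional, Tuple

Node = Tuple[str, Callable[[Any], Any]]


def run_traced(nodes: List[Node], value: Any) -> Tuple[Any, List[Dict[str, Any]]]:
    """Run stateless steps in order, recording each step's input and output."""
    trace = []
    for name, fn in nodes:
        out = fn(value)
        trace.append({"node": name, "input": value, "output": out})
        value = out
    return value, trace


def first_bad_node(trace: List[Dict[str, Any]], is_ok: Callable[[Any], bool]) -> Optional[str]:
    """Return the name of the first step whose output fails the check, if any."""
    for record in trace:
        if not is_ok(record["output"]):
            return record["node"]
    return None


# Three stand-in steps; the middle one simulates a prompt that returns nothing.
CHAIN: List[Node] = [
    ("extract_clause", lambda doc: doc.split(".")[0].strip()),
    ("plan_edit", lambda clause: ""),
    ("call_api", lambda plan: f"POST /edits {plan}"),
]

result, trace = run_traced(CHAIN, "Governing law is New York. Fees are due in 30 days.")
culprit = first_bad_node(trace, is_ok=lambda out: bool(out.strip()))  # "plan_edit"
```

A visual tool presents the same per-node inputs and outputs on a graph, so the faulty step is visible at a glance and does not have to be dug out of console logs.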

28:59 Introduction of Rivet

Raza Habib: So Andy comes to you with Rivet, it's a visual programming environment. He's like, let's not give up on the project. What happened next?

Cai Gogwilt: What happened next is I looked at this refactor that Andy had done, never having used Rivet before, and I was like, okay, I'll give it a shot. I tried to do the thing that really kind of broke my mind the week before, which is add just one more skill to the agent. Lo and behold, it was actually really easy to do that. Then I went into one of the skills that had been very difficult to build. I started making some modifications to it, specifically trying to make it faster. Suddenly, things started to click. I was like, oh, I now understand why by adding that skill, I kind of screwed up the routing logic. This is before GPT functions. When I was trying to make something faster, I had this idea for doing parallel tracks in terms of a fast and a slow thinking method. Being able to visually see the fast and the slow track happening at the same time and then coming together was so much easier than I thought it would be having tried to do this in code before. That experience over the next week made me realize, oh wow, this is really game-changing. This is really going to help us actually deploy our AI agent and develop it much more quickly. Then probably a week or two after that, we decided we wanted to eventually potentially open source this and make it useful to other people.
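One possible reading of the "fast and slow track" idea, sketched in code: start both tracks at the same time, surface the fast result as soon as it arrives, then join the two. The interview does not describe Ironclad's actual implementation, so everything here is an assumption for illustration: `fastTrack`, `slowTrack`, `answer`, the `TrackResult` type, and the timer delays that stand in for model latency.

```typescript
// Hypothetical sketch: two stubbed "tracks" run concurrently and are joined.
type TrackResult = { track: "fast" | "slow"; text: string };

const delay = (ms: number) => new Promise<void>((resolve) => setTimeout(resolve, ms));

// Stand-in for a quick, cheap model call.
async function fastTrack(question: string): Promise<TrackResult> {
  await delay(10);
  return { track: "fast", text: `Quick answer to: ${question}` };
}

// Stand-in for a slower, more deliberate model call.
async function slowTrack(question: string): Promise<TrackResult> {
  await delay(50);
  return { track: "slow", text: `Considered answer to: ${question}` };
}

// Starts both tracks at once, reports the fast draft when it is ready,
// and resolves once both tracks have finished.
async function answer(
  question: string,
  onDraft: (draft: TrackResult) => void
): Promise<TrackResult[]> {
  const fast = fastTrack(question).then((draft) => {
    onDraft(draft);
    return draft;
  });
  const slow = slowTrack(question);
  return Promise.all([fast, slow]);
}

answer("Is this clause acceptable?", (draft) => console.log("draft:", draft.text))
  .then((results) => console.log(results.map((r) => r.track).join("+")));
```

In code, the join point is buried in `Promise.all` and the callback. In a visual graph, the two branches and the place where they meet are drawn explicitly, which is the property Cai says made the approach easier to reason about.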

31:43 Benefits of Visual Programming

Raza Habib: Why choose to build it internally yourselves? I feel like there's increasingly a large market of different types of tooling emerging to help people build with LLMs. You've got us for evaluation and prompt management, but there are any number of flow-based tools. There are a lot of things that are Rivet-like out there. So I guess that's one question: it's not your core competency, so why did you choose to build something in-house? And two, and maybe the two are related, why open source it?

Cai Gogwilt: There are two answers to that question. The first is, honestly, marketing. Open sourcing Rivet felt like it would at the very least get Ironclad into the conversation around AI. It seems to have done that. There was also a secondary goal, which was, if this open-source launch goes successfully, we can start to accelerate the conversation about AI agents in the community. At least when we were starting to work on this, people were starting to say, okay, this is never going to work. Just do RAG and Q&A on your doc. As part of that, we were excited to create a neutral library, an open-source Switzerland, because we were observing a lot of startups trying to capture the LLM ecosystem on their own infrastructure. For us, that would have been untenable. Running our customers' data through a five or six-person startup's infrastructure would not be okay. Our biggest customers would laugh us out of the room if we asked if we could do that. We wanted to set up Rivet as potentially an open-source alternative to running your infrastructure on some smaller startup's servers. Not that we have anything against the smaller startups, more just that we don't want to fall behind. As an enterprise SaaS company, we wanted to make sure that the industry started to move in a direction that allowed other enterprise SaaS companies and ourselves to launch frontier, cutting-edge generative AI features. That was another reason, trying to make Rivet a neutral alternative. That's part of why we developed a pretty rich plugin system, so that different LLM providers and tooling providers could plug into it very easily.

33:59 Reasons for Open Sourcing Rivet

Raza Habib: This idea of a Humanloop-Rivet integration of some kind, I still haven't ruled it out in my head because I do think there's very complementary functionality between the two systems. One question, one thing that struck me about it is that you chose to go straight for TypeScript. A lot of the initial tooling in the LLM space has been Python-based. I wondered if you thought that was indicative of the type of person who's building AI systems having changed, or am I reading too much into that?

Cai Gogwilt: You are right to point out that Rivet is TypeScript native and works really well for people in the TypeScript ecosystem. It wasn't a reaction against Python so much as... the TypeScript community is pretty large. When we launched Rivet, I was honestly surprised at how lacking the TypeScript ecosystem was in the LLM space. We had to build a surprising amount of stuff from scratch that in Python was just an off-the-shelf module that everyone agreed on. We've had to experiment a little bit there. I think it's getting a little bit better lately, but it's taken a while.

40:57 The Future of AI Agents

Raza Habib: My slight theory on this is that ML and conventional AI, as we're calling it, was pretty mathsy, relied on these autodiff libraries, TensorFlow and PyTorch and others, and NumPy and all these things. So Python had become the dominant language for machine learning things. LLMs have changed who can build with AI again and democratized access. I saw you guys building it in TypeScript as one indication of that. This is actually for not just ML engineers who did a math degree or something, but for a generalist engineer who is interested in it and can build really sophisticated AI products.

Cai Gogwilt: That's an even better thesis. Let's go with your answer. But yeah, it's true. I feel like TypeScript has been interesting overall for the development ecosystem because you can build isomorphic code. It's fun to have the same language on your server side and on your client side. But I think actually just allowing your engineering team to be conversant on both sides of the stack that traditionally have been pretty separate has been a game changer. So to bring TypeScript into the LLM stack as well, so that you can have people operating across the server side, the client side, and the LLM side, is kind of a game changer in terms of coming up with new interaction patterns and delightful user experiences.

43:45 Importance of Launching Early and Often

Raza Habib: I have a few more different topics I want to ask you about, but just before we move past Rivet, do you want to make the 10-second pitch for people who might be thinking about using it and where they can find out more?

Cai Gogwilt: Yes, thank you. Rivet is a visual AI programming language. You can find out more on rivet.ironcladapp.com. There's a whole documentation and tutorial site. There are also a bunch of community members who have been making some fantastic tutorial videos that showcase how to use Rivet and integrate it into your TypeScript application, as well as illustrating some cool new techniques around how to use LLMs.

Raza Habib: Fantastic. It leads very naturally to the next thing I wanted to ask you, which is the thing that Rivet enabled for Ironclad was building with agents. I've seen you write a couple of articles recently on the new rules of AI development, and one of them was that agents are the future. This has been more controversial in some audiences than others, right? Some people are all in on agents. Some people think, oh, they're not reliable enough to trust in production. There's cost issues because they call the models multiple times. There's latency concerns if you don't know how many times models are going to be called. What's holding agents back? Why are you so bullish on them? What are people who are maybe more bearish getting wrong?

Cai Gogwilt: In my mind, agents are predicated on this idea that LLMs can not only read text and write text but also reason about it. If you believe that, then I feel like you need to be bullish on agents because reading, writing, and reasoning are the core skills that humans claim dominance over other species on, right? Oh, tool usage, right? Of course. I forgot about tool usage. But that's also part of agents. I think if you accept the premise that we're seeing LLMs using tools and reasoning about how to use them, then you have to accept the premise that they're a big deal.

Raza Habib: That could be very future-looking though, right? What makes Ironclad special in my mind in this space is you have agents in production. It's not just a future-looking thing for you. You've actually made it work practically. I'm curious why you think others are failing there or what are people getting wrong.

Cai Gogwilt: I don't have to remind you, but I've seen some tweets from other people. There is a publicly accessible agent-based application called ChatGPT that a lot of people are using. I forget who I was talking with about this, but we were looking at some tweets that said things like, when are AI agents actually going to be ready for use? It's like, wait, you can log into ChatGPT and you can use agents. Those are agents. Certainly, I think there's a moving bar for how good agents could be, and we're pushing the envelope here. But from my perspective, it's not a question of when agents are going to be ready or when it's going to be time for agents. It's a question of how capable they are today, how much further we can push them with today's frontier models, and how much further they are going to go with tomorrow's frontier models.

Raza Habib: One other tip that you had was to launch early and often in a more extreme way than you might normally. I'm curious, why is that especially true for LLMs? That's been a maxim in software development anyway. So what's different here?

Cai Gogwilt: What's different here is that no one has any intuition on how these things work. Maybe a broader meta point is another part of the reason we open-sourced Rivet. Part of the reason I've been writing so much about working with LLMs is because I think it's really important for us to share our work right now to push where LLMs and generative AI development can go. There's this pressure to know everything about the latest technique or which LLM providers are using a mixture of experts or what the hell mixture of experts is and things like that. There's this pressure to know everything. But the truth is that... maybe I won't speak for you, but it feels to me like the truth is that no one really knows what's going on here. No one has a full understanding of where we are, what the state of the art is today, how these things are truly operating, and certainly not where they're going tomorrow. What are they going to be capable of? I think I've heard stories about people making bets with each other about what the next model is going to be capable of and stuff like that. So that's, going back to your question, that's part of why releasing early and often is especially true in an LLM setting because you have no idea how this is going to work. You have no idea how your users are going to interact with it. Without that user interaction, all you have is a fun little demo that is probably pretty cool but may not work very well for anyone but you as a user.

Raza Habib: Would you say it's fair to say that it's by launching early, gathering user feedback, gathering evaluation data, seeing how well that's working, and then running this iteration loop again and again, is actually how you get to a reliable AI application?

Cai Gogwilt: Yeah, definitely. But it's actually even more than just a reliable AI application. Your users will show you capabilities that you didn't realize the LLM had. I think my favorite example of this goes back to the contract negotiation side of things. I was asking a user how he used this contract editing UI that we'd set up. He told me that he would highlight a clause and then ask for a list of things that he would not like about it. The UI didn't say that. The UI was like, how do you want to change this? And he was going ahead and using it a completely different way. But in doing so, he revealed an incredibly important use case that he was getting a lot of mileage out of and that we would otherwise not have known about. And we're seeing even crazier things with our conversational agent. People are using the interface in Japanese. We didn't set it up to be able to communicate in Japanese, but there are a couple of our users who prefer to interact with it in Japanese, which, great for them. You learn so much from your users. You can figure out new use cases, or they can figure out the new use cases for you, which is truly fascinating. You kind of get that in more traditional, non-generative AI software, but it's less surprising. With generative AI thrown into the mix, you get some very surprising results very quickly.

48:30 Speculations on the Future of LLMs

Raza Habib: There are so many more questions I want to ask you, but I can see we're coming up for time. Maybe I can finish on a few quickfire ones, and perhaps in the future, we can get you back on. Because I definitely feel like there's a second hour of questions in here. One of the things that we've skirted around a bit is you mentioned the bets within OpenAI or Claude 3.0 model, and what might GPT-5 be like. Do you think the anticipation is overhyped, underhyped? Are we close to AGI? What's your personal bet on how quickly things will change?

Cai Gogwilt: My personal bet is that things will change pretty quickly. Where will GPT-5 be? Hard to imagine, but I think that it's going to require less and less human oversight to do more and more incredible things. Will we achieve AGI? No, I don't think so. Because I think we will consistently, as humans, move the goalposts on what AGI is so that no system that we build until it actually takes over humanity will be considered AGI to us.

49:33 Impact of AI on Legal Work

Raza Habib: Interesting. What do you think the implications are specifically for your industry? If it is the case that today, 50 percent of one company's contracts are being reviewed by Ironclad's AI system and GPT-5 is going to require even less human review and be able to do more, what does that mean for your customers?

Cai Gogwilt: I think it's going to completely change the landscape of legal work and what our customers and users do on a day-to-day basis.

Raza Habib: Will I just be saying, have my AI negotiate with your AI? What's going to be happening?

Cai Gogwilt: Why not? Yeah. We haven't had that happen yet. I should look that up actually, whether we've had two auto-negotiated contracts.

Raza Habib: If that's true, I will add that to the show notes. I would love to know whether two Ironclad customers have basically put their AIs head to head.

Cai Gogwilt: I'll look into it. I guess the percentages make it likely that that would have happened by now. I'll look. In terms of the future of legal work and the impact of AI, I think it's going to fundamentally change how people work on contracts and legal work. But there's also this narrative, I think there was a stat like 44 percent of legal jobs are going to be eliminated by two years from now or something. I don't think that's going to be true because I think what we end up having is... there's this capability arms race. Legal work is often two-sided. There's someone defending and someone attacking. Ultimately, you end up in litigation. I think what happens right now is that a lot of legal teams prioritize their work and focus on what could go wrong and how to protect the company. They don't need to focus on this long tail of things that might go wrong but are very unlikely to. Meanwhile, people who are trying to take action against companies are going after the most likely things but are not necessarily going to find the long tail. I think what happens with generative AI in the legal world is it empowers both sides. It empowers the people who are trying to find faults and attribute fault. It allows them to explore that long tail very effectively. This requires the people who are defending the entities from fault to focus on that long tail and solve that long tail. The only way to do that is going to be with generative AI. I think it ends up being a little bit circular. This is potentially how things end up working for humanity. As a human society, we'll start to attribute more value to work that is harder to do with generative AI and less value to some of the things that are easier to do with generative AI. We'll continue to assert the dominance of human intelligence over artificial intelligence, whether or not that's true. I mean, it's probably true from some perspective.

52:55 Advice for Companies Starting with AI

Raza Habib: Maybe the very last question before we wrap, I think you were one of the earliest adopters of gen AI. You've been in some sense, one of the most successful companies in making useful products with it and blazed a path for others. If you were the CTO or product leader in AI at a company starting their journey today, what advice might you give them? What things should they consider early? How can they maximize their chance of success?

Cai Gogwilt: For a company just starting out today, I would probably look at making a really, really big swing on something. I think there are so many people flocking to generative AI as a disruptive technology that there's not really time to slowly go in a direction and find your way there. I think you really need to commit and say, this is going to be the way. Because if you're wrong about it, then sorry, you can try again in a few years. But if you're right about it and you don't take a big swing or you don't move as fast as you can in that direction with conviction, then there's a good chance someone next to you is doing that same thing or finding that direction and going faster. Much worse than being wrong would be to be right, but not have enough conviction to follow through.

Raza Habib: Interesting. Wow. Okay. Well, on that note, we got deep there right at the end. I just want to say a massive thank you for taking the time to chat with us. It's been a genuinely fascinating conversation. There were so many threads I would love to pull on more, and I really appreciate the time. So thanks very much, Cai.

Cai Gogwilt: Likewise. Yeah. Always enjoy my conversations with you, Raza, and thank you for having me on the show.

Raza Habib: It's been a pleasure.

About the author

- 𝕏@RazRazcle