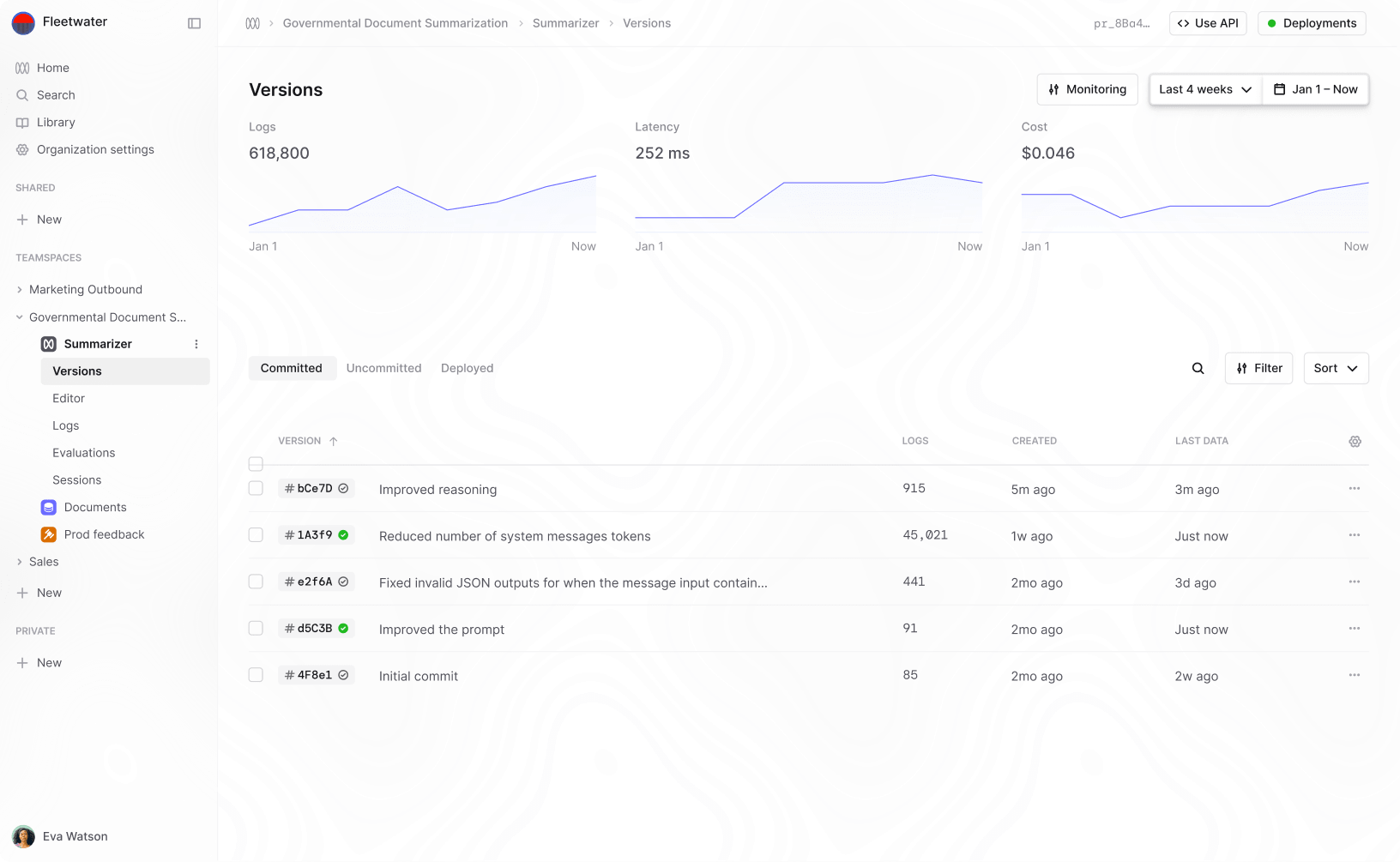

What is an active learning platform?

Active learning platforms are a new kind of tool for machine learning training and deployment. An active learning platform is a single tool that combines data annotation, model training and deployment in a continuous process. In this post I want to explain what an active learning platform is and when you might consider using one.

It started with training data platforms

As machine learning has gone mainstream, the demand for annotated training data has skyrocketed. A huge number of data labeling service providers have been created to fill this need. There are outsourced labeling providers like Scale AI, Appen, Cloudfactory and Hive AI. These specialize in high-volume annotation tasks, such as annotating images for driverless cars.

More recently, a new set of labeling tools has emerged to make it easier for companies to annotate data in-house. The core value of these "training data platforms" is to help companies get high-quality data by providing good user interfaces for labeling and quality assurance.

Data labeling platforms still view the machine learning process as linear and waterfall

The standard machine learning workflow is very linear and has multiple waterfall-style hand-overs. First data is labeled, then data scientists train models, and then models are deployed. Often the deployment is handled by a different team entirely. In this workflow it makes sense to have a tool that just does data labeling, but huge opportunities are being missed.

Active learning takes machine learning from waterfall to agile

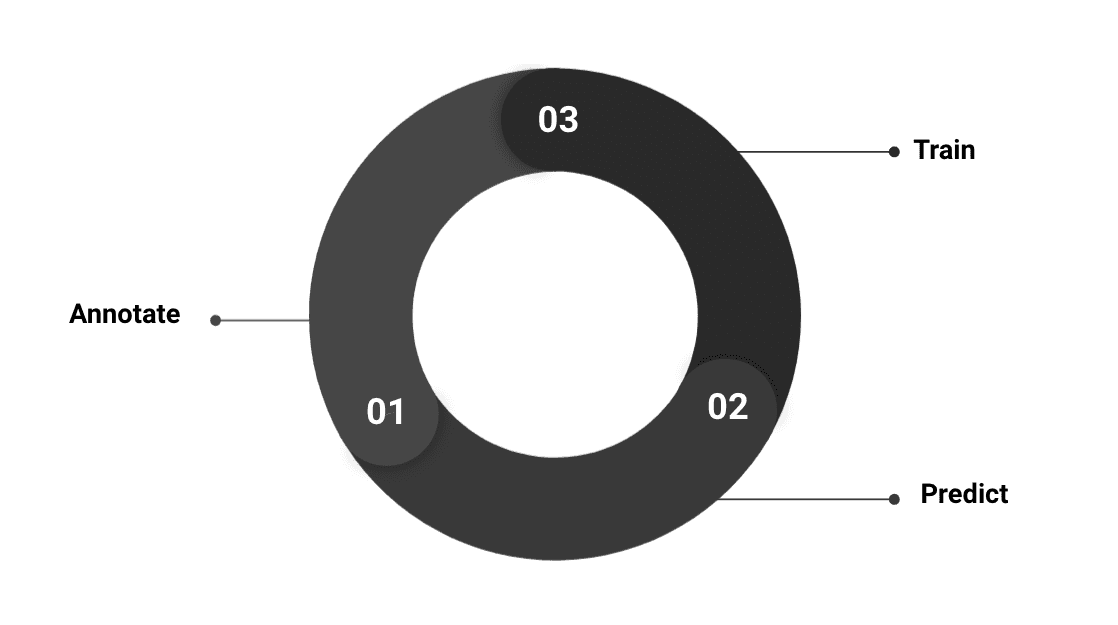

I've written recently about what we've learned from building machine learning tools alongside customers. One of the biggest lessons is that there are huge benefits to be had by combining data annotation and model training in a single closed-loop process. Instead of having multiple hand-overs between teams, data scientists and subject-matter experts collaborate in a single agile process.

Active learning is the name given to combining model training and data annotation. The core idea is that as the model learns you can use it to improve the quality of your dataset. The improved data then helps you improve your model in a virtuous cycle.
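To make the cycle concrete, here is a minimal sketch of such a loop on a toy one-dimensional problem. Everything in it is an assumption made for illustration: the `oracle` function stands in for a human annotator, `TRUE_BOUNDARY` is a made-up ground truth, and the "model" is just a threshold placed between the closest opposing labels. A real platform would use a real model and real annotators, but the shape of the loop is the same: train, ask for a label where the model is least sure, retrain.

```python
import random

TRUE_BOUNDARY = 0.6  # hypothetical ground truth, known only to the annotator


def oracle(x):
    """Stand-in for a human annotator labeling one data point."""
    return int(x >= TRUE_BOUNDARY)


def train(labeled):
    """Toy 1-D model: put the boundary midway between the closest opposing labels."""
    low = max(x for x, y in labeled if y == 0)
    high = min(x for x, y in labeled if y == 1)
    return low, high, (low + high) / 2


def active_learning_loop(pool, labeled, rounds):
    """Alternate between training and labeling the point the model is least sure about."""
    pool = list(pool)
    labeled = list(labeled)
    for _ in range(rounds):
        _, _, boundary = train(labeled)
        # Least certain = closest to the current decision boundary.
        query = min(pool, key=lambda x: abs(x - boundary))
        pool.remove(query)
        labeled.append((query, oracle(query)))
    return labeled


random.seed(0)
pool = [random.random() for _ in range(200)]  # unlabeled data
seed_labels = [(0.0, 0), (1.0, 1)]            # one example per class to start from

labeled = active_learning_loop(pool, seed_labels, rounds=10)
low, high, boundary = train(labeled)
print(f"labels used: {len(labeled)}, boundary estimate: {boundary:.3f}")
```

In this sketch only ten of the two hundred pooled points are ever sent to the annotator, because each query is chosen where the current model is least certain rather than at random.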

The best-known benefit of active learning is that it reduces the volume of data you need, but there are other key benefits too:

- Machine learning deployment becomes continuous and seamless. In the traditional waterfall machine learning paradigm, deployment is the final step after a model is trained. The problem is that when real-world data evolves, models become out of date. When you want to update a model, you find yourself having to go back to the start of the whole labeling process. With an active learning platform, your model is hosted and deployed from the very start. You improve your model by continuously adding new data where the model is unsure, and so you can avoid the problem of needing to redeploy at all.

- You get much faster feedback on model performance. Usually people label their data before they train any models or get any feedback. Often it takes days or weeks of iterating on annotation guidelines and re-labeling, only to discover that model performance falls far short of what is needed or that different labeled data is required. Since active learning trains a model frequently during the data labeling process, it's possible to get feedback and correct issues that might otherwise be discovered much later.

- Domain experts can collaborate much more easily with data scientists and engineers. For a lot of machine learning tasks, domain experts are absolutely critical in getting high-value data. For example, if you were training a classifier on mammograms then you would likely need a doctor to do the annotation, and for contract classification you may want lawyers. With a model-backed annotation interface, the annotators are intimately involved in the actual model training, and it's easier for the data scientists to improve the quality of their data.

Active Learning Platforms make this easy

Most of our tools and processes for building machine learning models weren't designed with active learning in mind. There are often different teams of people responsible for data labeling and model training, but active learning requires these processes to be coupled. If you do get these teams to work together, you still need a lot of infrastructure to connect model training to annotation interfaces. Most of the software libraries being used assume all of your data is labeled before you train a model, so to use active learning you have to write a tonne of boilerplate code. You also need to figure out how best to host your model, have the model communicate with a team of annotators, and update itself as it gets data asynchronously from different annotators.
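As a rough illustration of the kind of boilerplate involved, the sketch below collects labels arriving from several annotators at once and triggers a retrain every few labels. The names `LabelBuffer`, `fake_retrain` and `batch_size` are hypothetical and not taken from any particular library, and the retrain step is only a placeholder that records how many labels it was given.

```python
import threading


class LabelBuffer:
    """Collects labels from many annotators; retrains after every `batch_size` new labels."""

    def __init__(self, retrain, batch_size=5):
        self._retrain = retrain
        self._batch_size = batch_size
        self._labels = []
        self._lock = threading.Lock()

    def add(self, item_id, label):
        # The lock keeps concurrent annotators from corrupting the shared list.
        # Simplification: retraining runs while the lock is held, which would
        # block annotators in a real system; a background job is more realistic.
        with self._lock:
            self._labels.append((item_id, label))
            if len(self._labels) % self._batch_size == 0:
                self._retrain(list(self._labels))


retrain_sizes = []


def fake_retrain(labels):
    """Placeholder for real model training: just record the dataset size."""
    retrain_sizes.append(len(labels))


buffer = LabelBuffer(fake_retrain, batch_size=5)


def annotator(name, n_items):
    """Simulated annotator submitting `n_items` labels."""
    for i in range(n_items):
        buffer.add(f"{name}-{i}", i % 2)


threads = [threading.Thread(target=annotator, args=(name, 10)) for name in ("alice", "bob")]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(retrain_sizes)  # [5, 10, 15, 20]
```

Even this toy version has to deal with locking and with deciding when to retrain, which hints at why most teams would rather not build the full pipeline themselves.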

Many of the biggest hurdles to using active learning are just a question of having the right infrastructure. The most advanced teams have pieced together this infrastructure themselves. Tesla very famously built an active learning platform called the "data engine".

Existing data labeling platforms do make it possible to combine modeling and labeling by uploading predictions from your model to assist annotators, but the stages still largely happen separately. Using the model to find the most valuable data is left to the end user, yet it is a challenging research area in its own right and something most teams don't have time for.

Active learning platforms set up the infrastructure for you and let you focus on your domain. They update continuously as you label, automatically retraining the model as new data points arrive. They also have built-in methods for finding the highest-value data to annotate in order to improve model performance.
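One common way to rank data by value is uncertainty sampling: score each unlabeled item by the entropy of the model's predicted class probabilities and send the highest-scoring items to annotators first. The sketch below assumes the model already exposes per-class probabilities; the document ids and numbers are made up, and this is an illustration of the general technique rather than a description of how any specific platform selects data.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_most_uncertain(predictions, k):
    """Return the ids of the k items whose predictions have the highest entropy."""
    ranked = sorted(predictions, key=lambda item_id: entropy(predictions[item_id]), reverse=True)
    return ranked[:k]


# Hypothetical model outputs: item id -> [P(class 0), P(class 1)]
predictions = {
    "doc-1": [0.98, 0.02],  # model is confident
    "doc-2": [0.55, 0.45],
    "doc-3": [0.70, 0.30],
    "doc-4": [0.50, 0.50],  # model has no idea
}

to_label = select_most_uncertain(predictions, k=2)
print(to_label)  # ['doc-4', 'doc-2']
```

Entropy is only one possible scoring rule; margin-based or disagreement-based scores slot into the same `select_most_uncertain` shape.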

At Humanloop, we've built an active learning platform for NLP. If you'd like to find out more we'd love to chat and give you a demo.

About the author

- 𝕏@RazRazcle